The invisible gap in therapy delivery

Observation was rich, documentation was fragile.

Therapists observed rich behavioral evidence live, but by the time notes were written, timing was lost, context was compressed, and detail faded. Two gaps became clear: a Documentation Gap and a Goal Optimization Gap.

Field research included interviews with 5 therapy center owners and clinical leads, workflow observation across ABA and Speech Therapy, and analysis of 50+ live therapy sessions to identify where observation, documentation, and decision quality broke down.

Manual notes were retrospective and therapist-dependent, producing inconsistent reporting quality.

Goal updates were delayed and often memory-based, so session-level progress signals were missed.

A two-phase roadmap built around clinical trust

Validation before optimization.

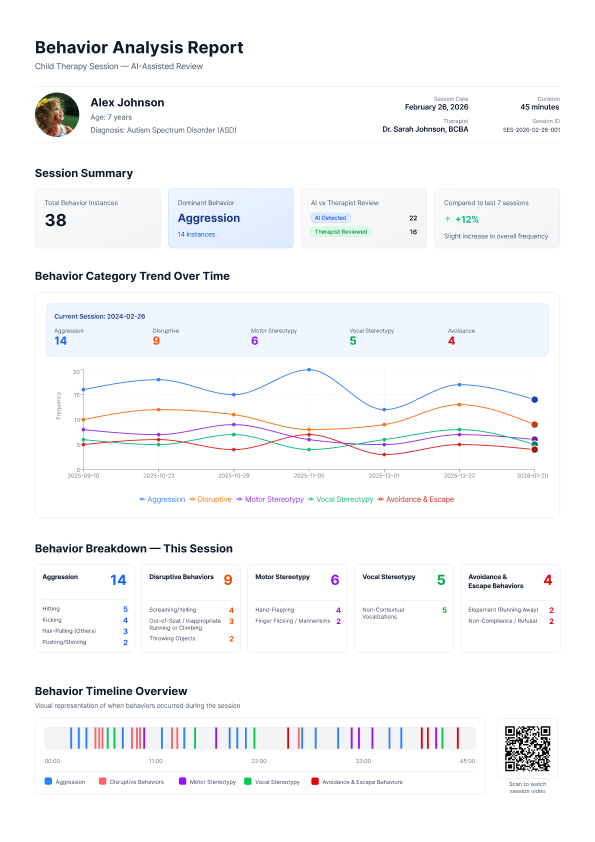

Phase 1 focused on structured session intelligence: converting session video into therapist-validated reports that clinicians could trust. The objective was not to automate decisions but to make observation reproducible and defensible.

Phase 2, goal optimization, was intentionally sequenced after Phase 1. It covers cross-checking session behaviors against treatment plans, surfacing alignment gaps, and identifying plateau signals, and it starts only once the behavioral baseline is reliable.

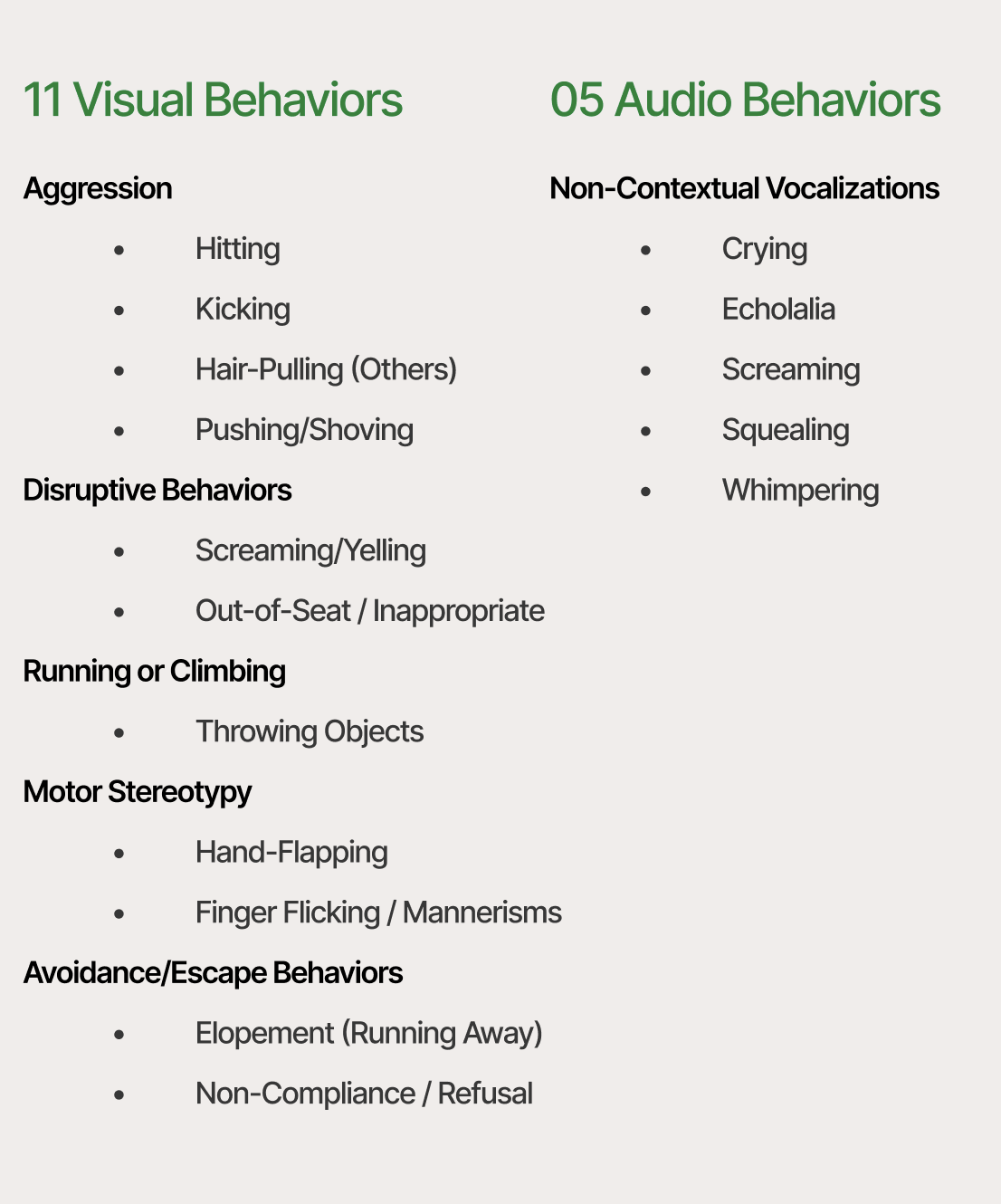

Before model training, a clinically grounded taxonomy was defined: 7 behavior categories and 32 behaviors. ML scope was staged deliberately, prioritizing higher-confidence behaviors first to protect therapist trust during pilot validation.
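As a rough illustration of that staging decision, the sketch below splits a taxonomy into a pilot scope and a deferred scope. It assumes each behavior carries a single validation score; the `Behavior` fields, the example labels in the usage note, and the `min_f1` threshold are hypothetical and not taken from the project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Behavior:
    name: str             # hypothetical behavior label
    category: str         # hypothetical category label
    validation_f1: float  # assumed score against therapist annotations, 0.0 to 1.0


def stage_behaviors(behaviors, min_f1=0.8):
    """Split behaviors into a pilot scope and a deferred scope.

    min_f1 is an illustrative threshold, not a value from the project.
    """
    pilot = [b for b in behaviors if b.validation_f1 >= min_f1]
    deferred = [b for b in behaviors if b.validation_f1 < min_f1]
    # Highest-confidence behaviors come first in the pilot scope.
    pilot.sort(key=lambda b: b.validation_f1, reverse=True)
    return pilot, deferred
```

Calling `stage_behaviors([Behavior("eye_contact", "social", 0.9), Behavior("echolalia", "vocal", 0.6)])` would place the first behavior in the pilot scope and defer the second, so therapists only see detections the team is prepared to stand behind.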

Reduce the MVP to the core clinical loop, for faster validation and lower delivery risk.

Reframe the AI strategy around privacy, regional deployment constraints, and the reality of model availability.

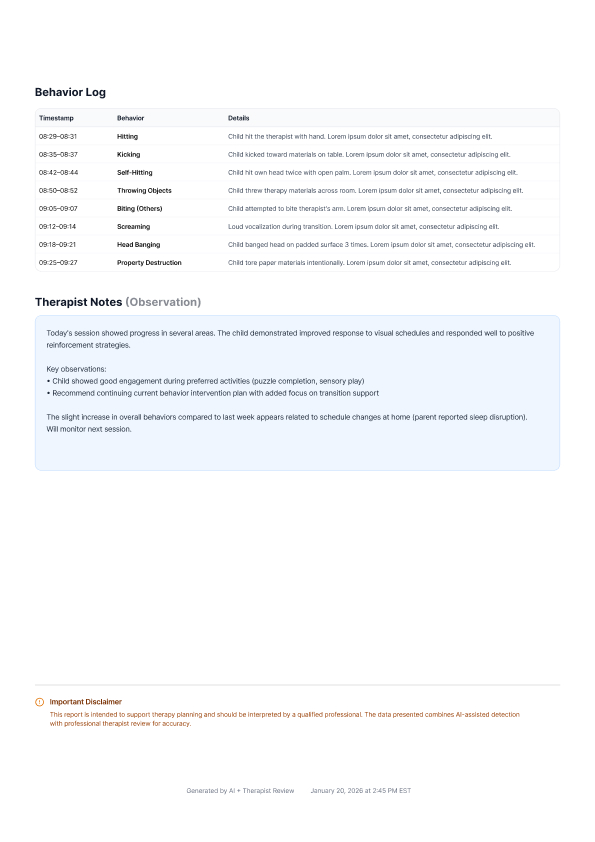

I deliberately kept diagnosis, goal changes, and clinical interpretation outside the automated layer. The product's job was to make session evidence easier to review, not to replace therapist judgment.

Upload, analyze, review, report

AI prepares the evidence; clinicians make the call.

Upload Session Video

Therapist uploads a recorded therapy session to the secure platform.

AI Analysis

Platform detects structured behavioral events and generates initial insights.

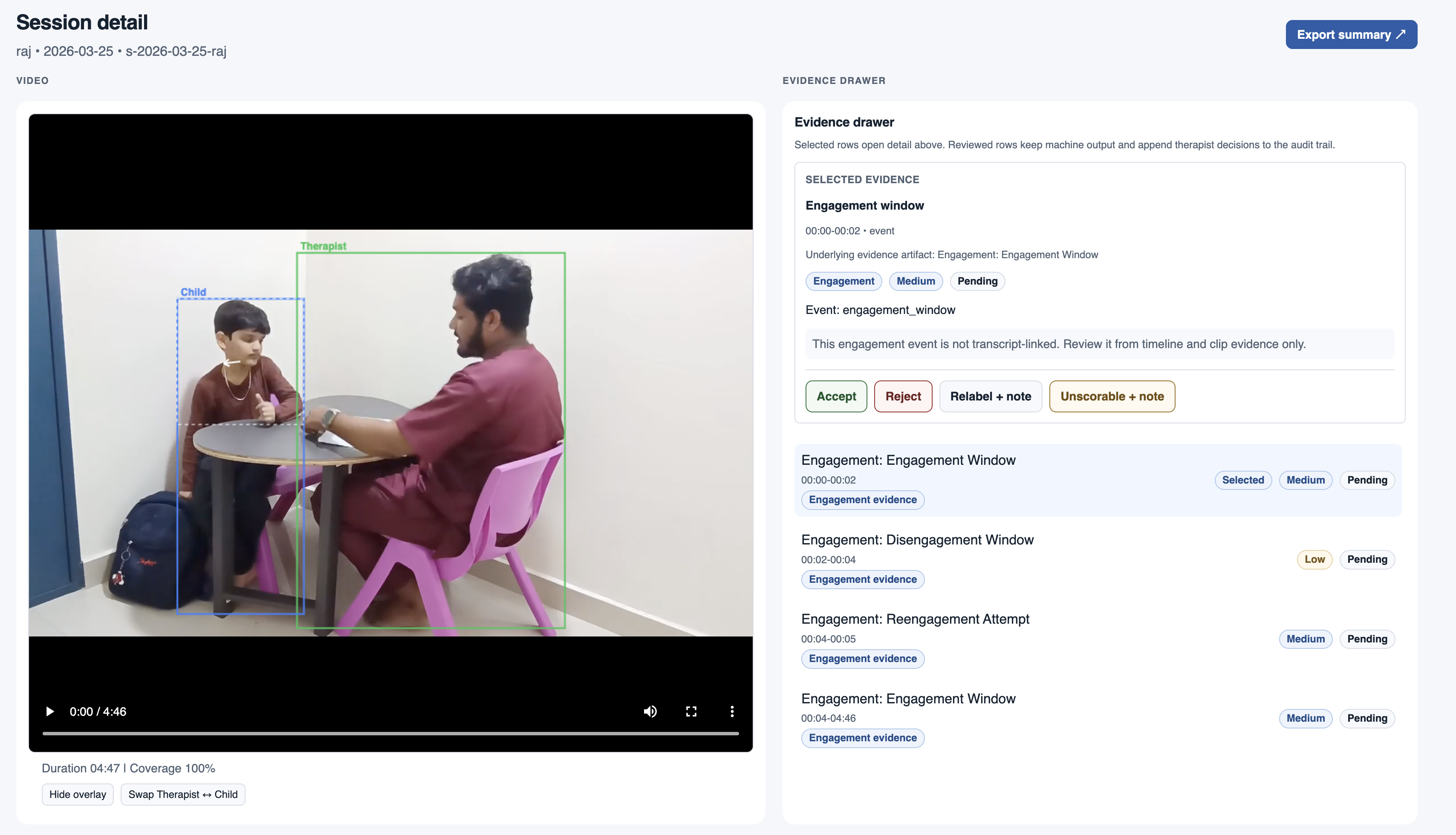

Therapist Review

Clinician reviews, edits, and validates all insights and recommendations.

Export & Share

Generate a professional PDF report for parents, insurance, or clinical records.

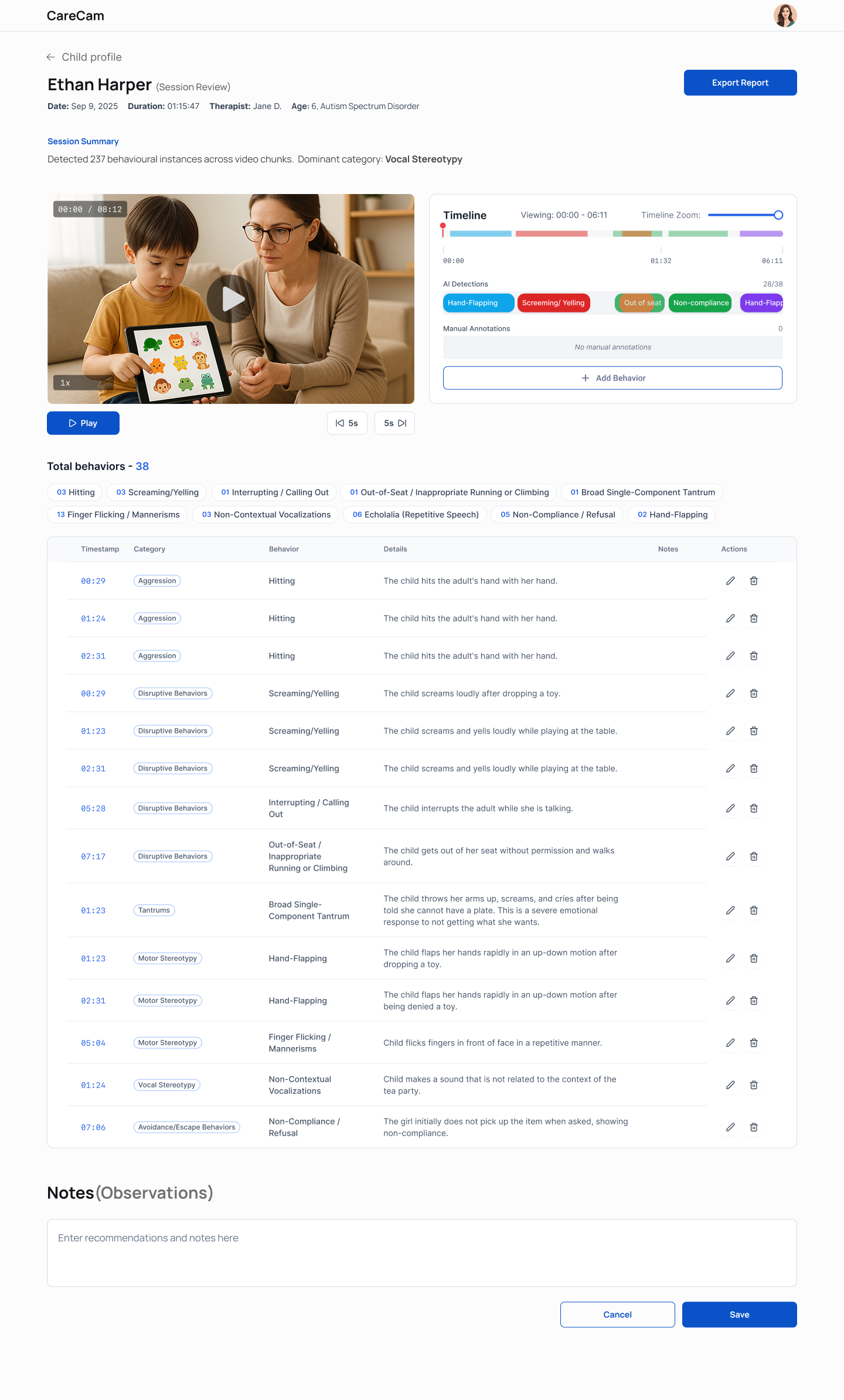

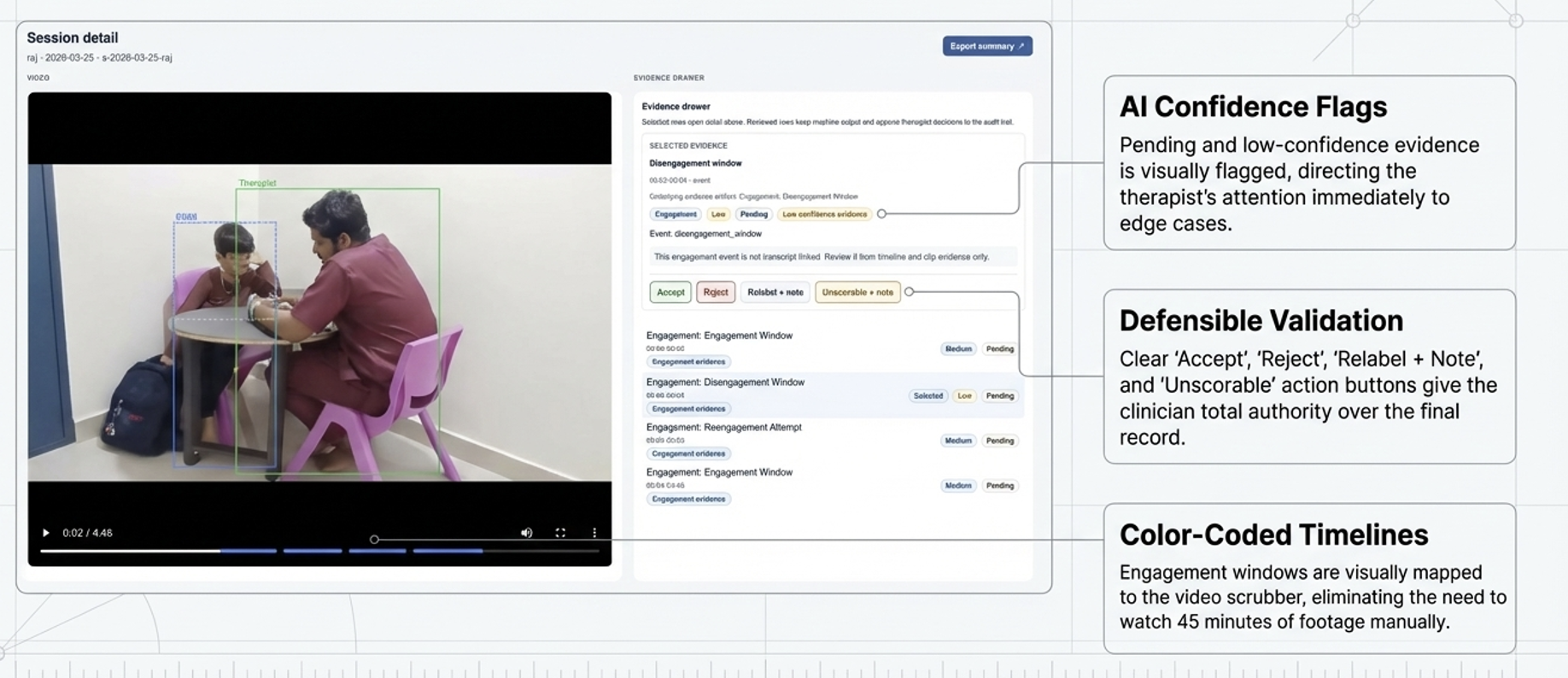

Accept / Reject / Relabel actions are core clinical controls, not secondary UI actions.

A behavior label without timestamp context is clinically weak; sequence defines interpretation.
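One way to picture these two principles together is a timestamped event record whose review state only changes through explicit clinician actions. This is a minimal sketch under assumed names (`BehaviorEvent`, `ReviewStatus`, and the helper functions are hypothetical), not the product's actual data model.

```python
from dataclasses import dataclass, replace
from enum import Enum
from typing import Optional


class ReviewStatus(Enum):
    PENDING = "pending"
    ACCEPTED = "accepted"
    REJECTED = "rejected"
    RELABELED = "relabeled"


@dataclass(frozen=True)
class BehaviorEvent:
    start_s: float                        # offset into the session video, in seconds
    end_s: float
    label: str                            # AI-proposed behavior label
    confidence: float                     # model confidence, 0.0 to 1.0
    status: ReviewStatus = ReviewStatus.PENDING
    original_label: Optional[str] = None  # kept so a relabel stays auditable


def accept(event):
    return replace(event, status=ReviewStatus.ACCEPTED)


def reject(event):
    return replace(event, status=ReviewStatus.REJECTED)


def relabel(event, new_label):
    # Preserve the AI's label alongside the clinician's correction.
    return replace(event, label=new_label,
                   status=ReviewStatus.RELABELED,
                   original_label=event.label)


def timeline(events):
    """Return non-rejected events in session order, so sequence stays visible."""
    kept = [e for e in events if e.status is not ReviewStatus.REJECTED]
    return sorted(kept, key=lambda e: (e.start_s, e.end_s))
```

Keeping events immutable and routing every change through `accept`, `reject`, or `relabel` means each state on the timeline traces back to a clinician action rather than a silent edit.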

From memory-based reporting to evidence-based review

Documentation moved out of live therapy moments.

Before

- Note workflows were manual and retrospective.
- In-session note-taking interrupted therapy attention.
- Quarterly reports were often reconstructed from memory.

After

- Sessions became reviewable, timestamped evidence.
- Therapists stayed present in-session and reviewed post-session.
- Reports were generated from validated session data.

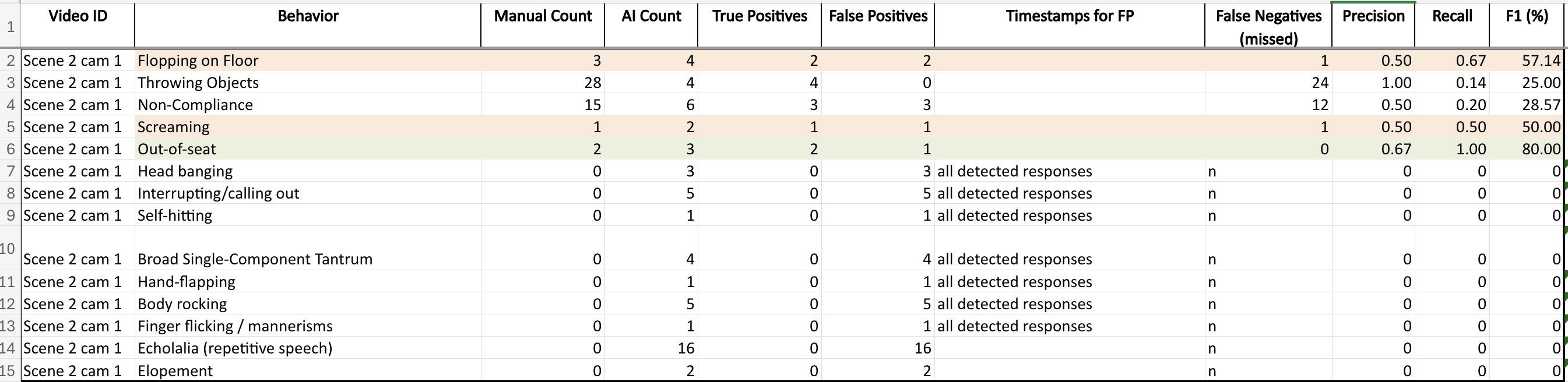

Therapists' manual annotations served as the benchmark for AI detections. Precision, recall, and F1 varied by behavior category, and those differences directly shaped how the interface treated each detection and communicated uncertainty.
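The per-category comparison can be sketched as follows, using the standard definitions of precision, recall, and F1. The input shape (one tuple per scored segment) and the function name are assumptions for illustration; the project's actual evaluation pipeline is not described here.

```python
from collections import defaultdict


def per_category_metrics(pairs):
    """Compute precision, recall, and F1 per behavior category.

    pairs: iterable of (category, predicted, annotated) tuples, one per
    scored segment, where `predicted` is the AI detection and `annotated`
    is the therapist's ground-truth label (both booleans).
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0})
    for category, predicted, annotated in pairs:
        c = counts[category]
        if predicted and annotated:
            c["tp"] += 1
        elif predicted:
            c["fp"] += 1
        elif annotated:
            c["fn"] += 1

    metrics = {}
    for category, c in counts.items():
        detected = c["tp"] + c["fp"]
        actual = c["tp"] + c["fn"]
        precision = c["tp"] / detected if detected else 0.0
        recall = c["tp"] / actual if actual else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        metrics[category] = {"precision": precision, "recall": recall, "f1": f1}
    return metrics
```

A category with low precision might call for a more cautious visual treatment than one with low recall, which is one reason to report the three numbers separately rather than a single accuracy figure.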

In parallel, I independently scoped and shipped a skill-detection MVP end-to-end: framing, UX architecture, interaction design, and live ML integration. It validated the same core loop quickly: session signals in, clinician-reviewed outputs out.

Pilot outcomes and platform signal

Usable now, expandable by design.

Live pilot centers

Actively uploading session data.

Therapy sessions analyzed

AI detections compared against therapist annotations as ground truth.

Platform vision

Therapy, nursing, elderly, neonatal, ICU/rehab.

The same loop scales beyond therapy: upload the session, review the AI evidence, validate clinically, generate the report. The taxonomy changes by domain; the accountability pattern remains stable.

carecam.in

In clinical AI, interface decisions are accountability decisions.

Model accuracy is not enough. Uncertainty must be visible where clinician decisions are made.

Therapist controls are not confirmations; they are responsibility boundaries inside high-stakes workflows.

If I repeated this project, I would start trust instrumentation earlier, tracking overrides, relabel frequency, skips, and completion behavior from the first pilot sessions.
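A minimal sketch of what such instrumentation could compute from a review log is shown below. The action names (`"accept"`, `"reject"`, `"relabel"`, `"skip"`) and the metric definitions are assumptions chosen for illustration, not the product's actual telemetry.

```python
from collections import Counter


def trust_metrics(actions, total_detections):
    """Summarize clinician review behavior for one session.

    actions: list of strings, each one of "accept", "reject", "relabel",
             or "skip" (hypothetical action names).
    total_detections: number of AI detections presented for review.
    """
    counts = Counter(actions)
    reviewed = counts["accept"] + counts["reject"] + counts["relabel"]
    overrides = counts["reject"] + counts["relabel"]
    return {
        # Share of reviewed detections where the clinician disagreed with the AI.
        "override_rate": overrides / reviewed if reviewed else 0.0,
        # Share of reviewed detections where the label itself was corrected.
        "relabel_rate": counts["relabel"] / reviewed if reviewed else 0.0,
        # Share of presented detections the clinician explicitly skipped.
        "skip_rate": counts["skip"] / total_detections if total_detections else 0.0,
        # Share of presented detections that received a decision.
        "completion_rate": reviewed / total_detections if total_detections else 0.0,
    }
```

Tracked per behavior category over time, a rising override or skip rate would be an early sign that therapists are losing confidence in a class of detections.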

- Human-in-the-loop review at every critical decision node.
- Temporal clarity over isolated labels.
- Progressive disclosure for cognitive control.
- Visible system state for trust and accountability.

CareCam proved that structured, therapist-validated evidence can replace retrospective memory workflows without sacrificing clinical agency.